Magic Chef

An interactive cookbook experience

Magic Chef is a web application developed as part of a high-fidelity prototype, functioning as a cooking assistant that helps users choose a recipe to cook and guides them through the cooking process. The project integrates sensor-based input to create an engaging and enchanting experience, all embedded within the form of a physical cookbook.

The concept reimagines how people interact with recipes by combining physical interactions, such as ingredient scanning and wand gestures, with a screen-based assistant. The aim is to make cooking more magical, intuitive, enjoyable, and user-centred, particularly for novice or casual cooks who may feel overwhelmed by traditional recipe formats or struggle to decide what to prepare.

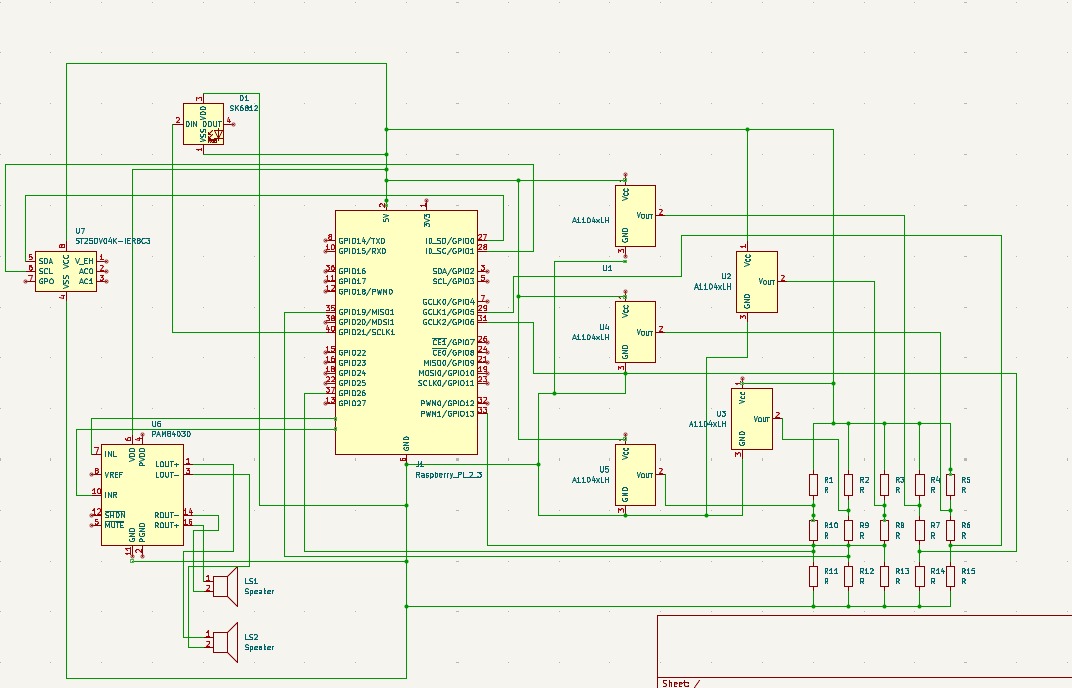

The left-hand 'page' of the book contains a 'magic circle': a wooden platform embedded with a circular RFID scanner, surrounded by Hall effect sensors disguised as buttons, and encircled by an LED ring that responds to different ingredients. These sensors are controlled using a magnetic wand, enabling users to navigate the interface without physically touching the screen. When an RFID-tagged ingredient is placed on the platform, the application recognises it and recommends personalised recipes. On the right-hand 'page', a 7-inch touchscreen displays the web application, powered by a Raspberry Pi running the complete software stack.

The software architecture includes a Python-based backend using FastAPI, a frontend built with Next.js, and sensor scripts operating on the Raspberry Pi. The backend exposes both a REST API for serving recipe data from an SQLite database and a WebSocket server for real-time updates. Sensor input, including RFID scans and Hall sensor triggers, is published via MQTT to a cloud broker; the backend subscribes to the broker and relays the events to the frontend over the WebSocket connection. The application supports two distinct cooking modes: a guided mode and a free cooking mode, each with its own approach to assisting the user during meal selection and preparation.
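To make the recipe lookup concrete, the following is a minimal sketch of the kind of SQLite query a REST handler could run when an ingredient is scanned. The schema (`recipes`, `recipe_ingredients`), the sample rows, and the function names are assumptions made for illustration and are not taken from the project's actual database.

```python
import sqlite3


def create_demo_db() -> sqlite3.Connection:
    """Build an in-memory database using a hypothetical schema and sample data."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE recipes (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
        CREATE TABLE recipe_ingredients (
            recipe_id INTEGER NOT NULL REFERENCES recipes(id),
            ingredient TEXT NOT NULL
        );
        INSERT INTO recipes (id, title) VALUES
            (1, 'Tomato soup'), (2, 'Pancakes'), (3, 'Caprese salad');
        INSERT INTO recipe_ingredients (recipe_id, ingredient) VALUES
            (1, 'tomato'), (1, 'onion'),
            (2, 'flour'), (2, 'egg'),
            (3, 'tomato'), (3, 'mozzarella');
        """
    )
    return conn


def recipes_with_ingredient(conn: sqlite3.Connection, ingredient: str) -> list[str]:
    """Return the titles of all recipes that use the given ingredient, sorted by title."""
    rows = conn.execute(
        """
        SELECT r.title
        FROM recipes AS r
        JOIN recipe_ingredients AS ri ON ri.recipe_id = r.id
        WHERE ri.ingredient = ?
        ORDER BY r.title
        """,
        (ingredient.lower(),),  # parameterised to avoid SQL injection
    ).fetchall()
    return [title for (title,) in rows]


if __name__ == "__main__":
    print(recipes_with_ingredient(create_demo_db(), "Tomato"))
```

In a FastAPI backend, a function like this would typically sit behind a route handler that returns the list as JSON; the sketch leaves that wiring out so it runs with the standard library alone.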

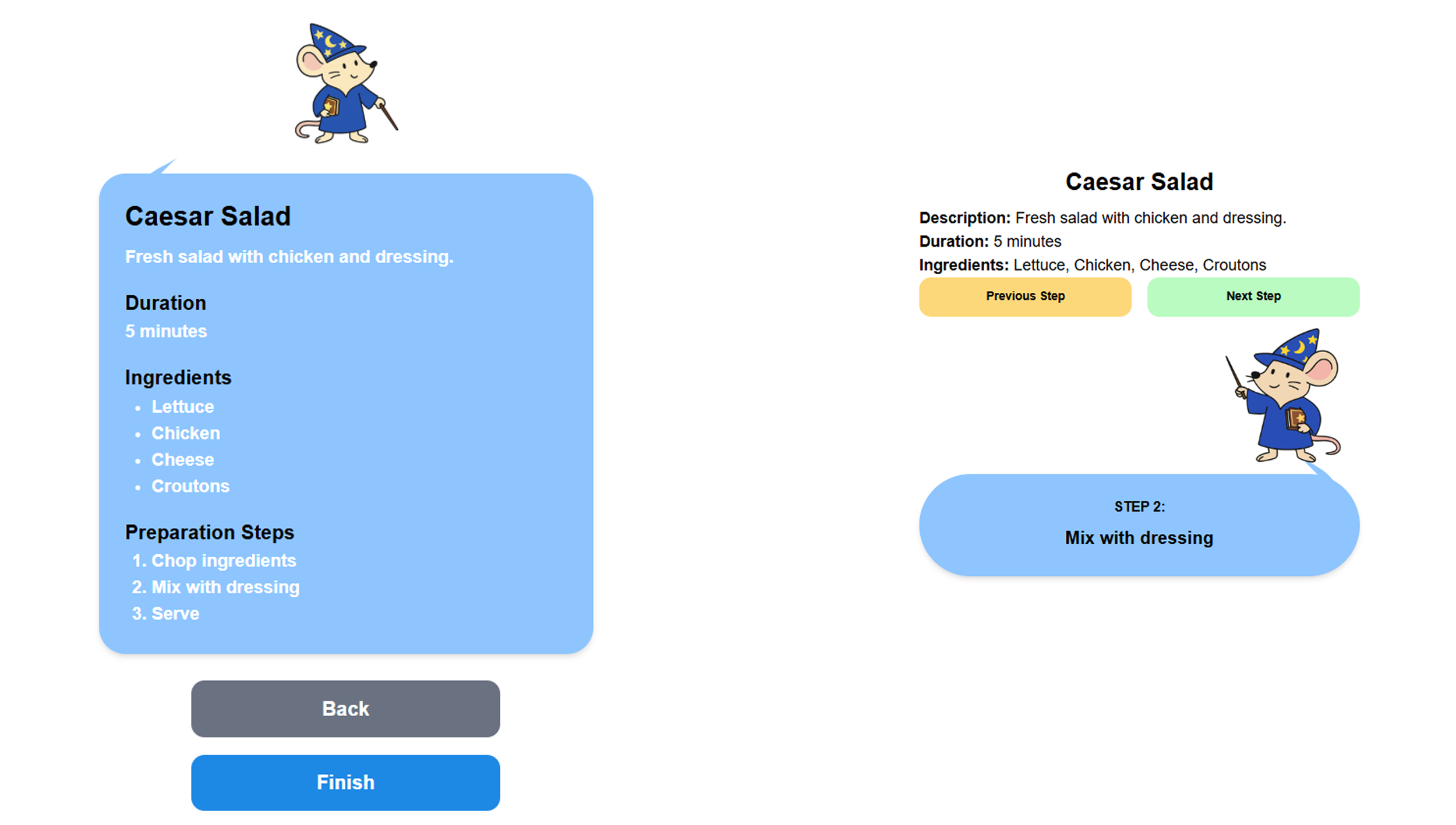

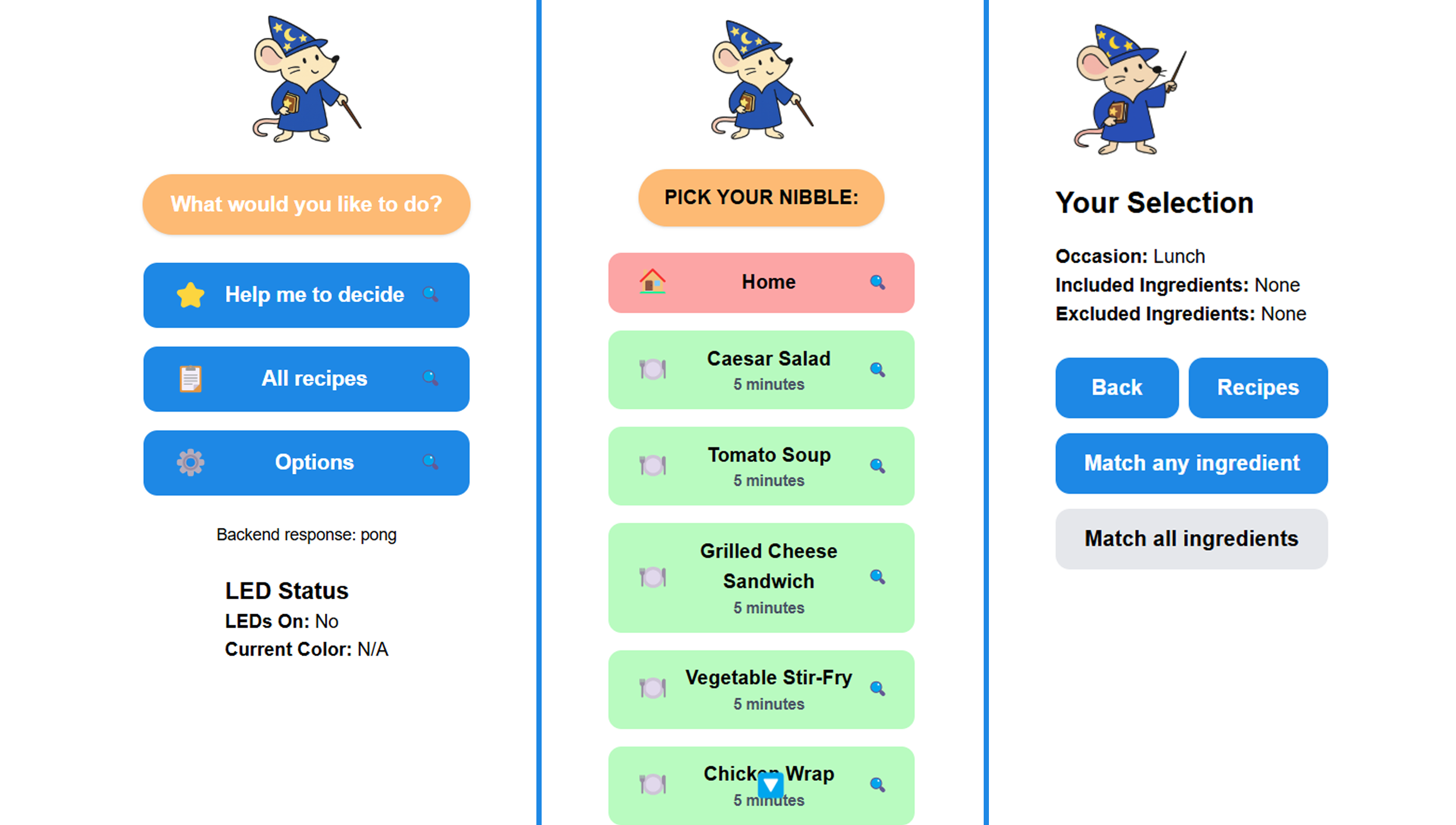

The frontend is organised into three main sections. The Help Me Decide view helps users narrow down recipe options based on dietary preferences. The All Recipes view gives users a complete overview of the recipes stored in the application's database. The Options view allows users to adjust application preferences and enable accessibility features. Interaction is flexible: users can engage with the application via touchscreen, wand-based gestures, or ingredient scanning. After selecting a recipe, users can proceed in the free cooking mode, which displays all instructions at once, or in the guided cooking mode, which walks them through one step at a time.
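The filtering behind a view such as Help Me Decide can be sketched as a simple subset check. The example is written in Python for illustration only; the `Recipe` type, the `diet_tags` field, and the sample recipes are hypothetical, and the real application may filter in the Next.js frontend or in the backend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    """Hypothetical recipe record carrying a set of dietary tags."""
    title: str
    diet_tags: frozenset[str] = frozenset()


def help_me_decide(recipes: list[Recipe], preferences: set[str]) -> list[Recipe]:
    """Keep only the recipes that satisfy every selected dietary preference."""
    wanted = {p.lower() for p in preferences}
    # A recipe matches when the wanted tags are a subset of its own tags.
    return [r for r in recipes if wanted <= r.diet_tags]


if __name__ == "__main__":
    catalogue = [
        Recipe("Lentil curry", frozenset({"vegan", "vegetarian", "gluten-free"})),
        Recipe("Margherita pizza", frozenset({"vegetarian"})),
        Recipe("Chicken stew", frozenset({"gluten-free"})),
    ]
    for recipe in help_me_decide(catalogue, {"Vegetarian"}):
        print(recipe.title)
```

With no preferences selected, the subset check is trivially true, so every recipe is returned, which matches the behaviour one would expect from an unfiltered list.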

The physical prototype is built from laser-cut wood, stained in a rich crimson and engraved with golden accents to evoke a mystical aesthetic. Internally, the cookbook houses the electronics, sensors, and wiring required for interaction.

A user study with ten participants was conducted to evaluate the prototype. Participants compared the gesture-based controls to direct touchscreen input. While gesture navigation was perceived as more novel and enjoyable, it was less efficient than touchscreen input. Overall, the system received positive feedback, which suggests that Magic Chef offers an engaging and unique interface for recipe exploration and cooking assistance.

Core features and design considerations

- Unique interaction: A physical book embedded with sensors creates an immersive and intuitive cooking experience.

- Magnetic wand and Hall sensor navigation: Users navigate the app by moving a wand with a magnetised tip over Hall effect sensors embedded in the book.

- Ingredient-driven recipe filtering: Ingredients tagged with RFID chips trigger context-aware suggestions for matching recipes on the screen.

- Dual cooking modes: Users can choose between a free cooking mode (overview) and a guided cooking mode (step-by-step with assistant guidance).

- Frontend architecture (Next.js): The web interface is designed for usability and enchantment and supports sensor-based and traditional input methods.

- Backend architecture (FastAPI): The backend manages MQTT subscriptions, WebSocket communication, and recipe data delivery in real time.

- Sensor integration with MQTT: All sensor data is transmitted via a HiveMQ broker and parsed by the backend, which keeps the sensor scripts decoupled from the web application.
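The sensor-to-screen data flow described in the list above can be sketched as a pure function that turns an incoming MQTT message into the JSON event a WebSocket server would forward to the frontend. The topic names, payload fields, and the mapping from Hall sensor index to navigation action are all assumptions for illustration; the project's actual message format is not documented here.

```python
import json
from typing import Optional

# Hypothetical topic names and sensor-to-action mapping.
RFID_TOPIC = "magicchef/rfid"
HALL_TOPIC = "magicchef/hall"
HALL_ACTIONS = {0: "previous", 1: "select", 2: "next"}


def to_ws_event(topic: str, payload: bytes) -> Optional[str]:
    """Translate one MQTT message into a JSON string for WebSocket clients.

    Returns None for malformed or unrecognised messages so the caller can drop them.
    """
    try:
        data = json.loads(payload.decode("utf-8"))
    except ValueError:  # covers both UnicodeDecodeError and json.JSONDecodeError
        return None
    if not isinstance(data, dict):
        return None

    if topic == RFID_TOPIC and isinstance(data.get("ingredient"), str):
        event = {"type": "ingredient_scanned", "ingredient": data["ingredient"]}
    elif topic == HALL_TOPIC and isinstance(data.get("sensor"), int) and data["sensor"] in HALL_ACTIONS:
        event = {"type": "navigate", "action": HALL_ACTIONS[data["sensor"]]}
    else:
        return None
    return json.dumps(event)


if __name__ == "__main__":
    print(to_ws_event(RFID_TOPIC, b'{"ingredient": "tomato"}'))
    print(to_ws_event(HALL_TOPIC, b'{"sensor": 2}'))
```

Keeping the translation free of network code makes it easy to test in isolation; an MQTT client callback would call it for each message and broadcast any non-None result to connected WebSocket clients.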

Magic Chef demonstrates how technology can be thoughtfully embedded into familiar forms to create enchanting and meaningful user experiences. It blends embedded systems, sensor technologies, and web development to offer an alternative way of interacting with information. The project shows how working across disciplines (software engineering, hardware prototyping, user-centred design, and the application of HCI theory to real-world problems) can produce unique user experiences.